The Structural Limits of AI: Why Algorithms Can’t Lead

As leaders incorporate AI into their organizations, it is crucial to understand a key distinction: AI is a tool, while leadership is a responsibility. Misusing AI creates risks such as eroded trust, evaded accountability, and outsourced judgment, and those risks land back on leadership. The issue is not whether AI is powerful; it certainly is. The real question is: when does having power without accountability become a liability?

AI can accelerate thinking, but it cannot own consequences. That distinction defines the boundary.

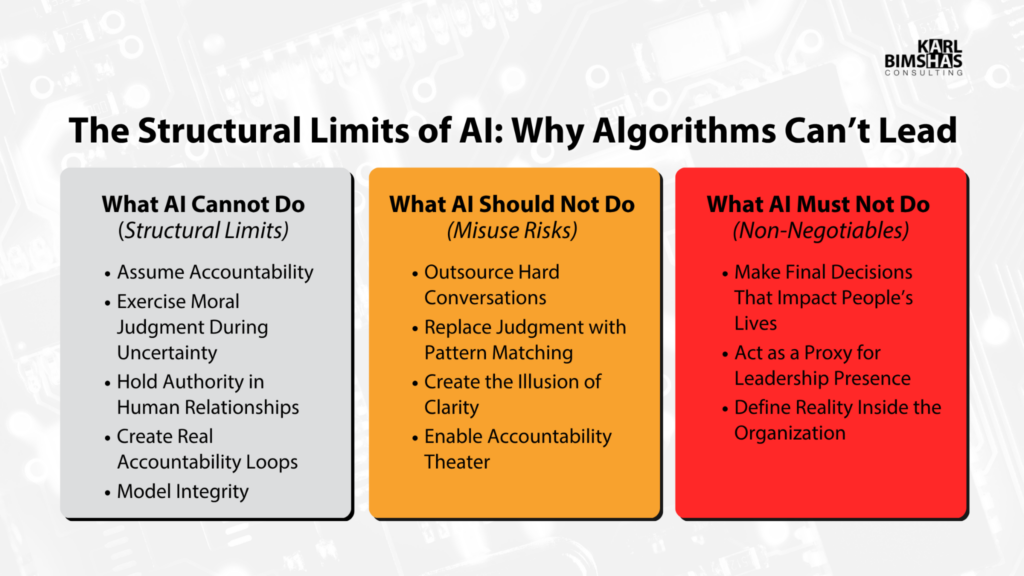

What AI Cannot Do: The Structural Limits

These are not temporary gaps; they are inherent to the technology.

1. Assume Accountability

AI does not carry consequences. It can recommend a reorganization, suggest a termination, or produce a complex strategy, but it cannot:

- Face the employee afterward.

- Absorb reputational risk.

- Live with second-order effects.

Accountability is a hallmark of effective leadership. AI is structurally incapable of accountability.

The Implication: Leaders must own decisions; AI can only inform.

2. Exercise Moral Judgment During Uncertainty

AI can simulate ethical reasoning, but it cannot bear the weight of an ethical choice. Effective leadership decisions involve competing values, incomplete information, and human cost. No dataset can resolve decisions in which every option carries a cost. Leadership does. In other words, AI cannot stand behind the statement, “This is the right call, even though every available option hurts people.” Leaders must retain that obligation.

The Implication: AI can outline trade-offs; it cannot decide what is “right.”

3. Hold Relational Authority

Leadership is relational. Authority requires trust built over time, credibility under pressure, and a visible consistency between words and actions. AI has no skin in the game, no history, and no presence.

The Implication: Only humans can lead; AI can aid communication.

4. Create Real Accountability Loops

Effective leaders build accountability into how the team operates rather than relying on performative check-ins. AI can track tasks and send reminders, but it cannot confront avoidance, challenge rationalization, or enforce standards when the situation becomes uncomfortable.

The Implication: Only leaders enforce accountability; AI monitors, but cannot compel action.

5. Model Integrity

Integrity is observed over time, especially under pressure. AI does not experience pressure, make sacrifices, or choose the “hard right” over the “easy wrong.” It produces outputs; it does not live with the consequences.

The Implication: Leaders demonstrate integrity; AI can only articulate it.

What AI Should Not Do: The Misuse Risks

Using AI to avoid responsibility is a form of leadership avoidance.

1. Outsourcing Hard Conversations

Using AI to write performance warnings, deliver layoffs, or mediate conflict is an act of avoidance in leadership.

The Cost: Rapid erosion of confidence and credibility.

2. Replacing Judgment with Pattern Matching

AI excels at identifying “what usually works,” but leadership often requires doing what doesn’t usually work: deliberately breaking patterns when the context demands it.

The Cost: Safe, mediocre decisions that fail in unique contexts.

3. The Illusion of Clarity

Leaders often mistake clean, AI-generated language for clear thinking or strategy.

The Cost: Well-written confusion.

4. Accountability Theater

Dashboards and automated reports create the appearance of control. Without human enforcement, they are just noise.

The Cost: Sustained activity without measurable progress.

The Non-Negotiables

If leaders yield here, failure becomes an ethical matter. AI must never:

- Make Final Decisions Impacting Lives: Hiring, firing, compensation, and disciplinary decisions must remain in human hands.

- Act as a Proxy for Presence: Presence is intentionally non-scalable. AI-led performance reviews or “check-ins” are a poor substitute for human engagement.

- Define Your Reality: If you rely on AI summaries instead of direct observation and ground truth, you lose control of your system. AI becomes a filter, then a distortion.

The Bottom Line: AI as Amplifier, Not Operator

Failure is usually a systems problem: a lack of clarity, consistency, or real accountability.

AI can strengthen your system if the leader remains in control:

- Increase Clarity through synthesis and pattern recognition.

- Support Consistency using better structure and reminders.

- Strengthen Visibility through data tracking and diagnostics.

Relying on AI to take over ownership or avoid discomfort leads to quick failure. Leadership is characterized by ownership and accountability, not by intelligence, speed, or productivity. When ownership is minimized, leadership disappears. No system can make up for that.

“A computer can never be held accountable, therefore a computer must never make a management decision.” (widely attributed to a 1979 IBM training presentation)

Machines do not have moral agency, consciousness, or intent. Accountability is still a human responsibility.

The AI Leadership Integrity Assessment

Instructions: Answer ‘Yes’ or ‘No’ to each question.

| Section 1: Structural Ownership (Non-Delegable Responsibilities) | YES | NO |
| --- | :---: | :---: |
| 1. Accountability: Do you personally own the outcome of AI-generated plans? | ❑ | ❑ |
| 2. Moral Judgment: Do you make the final call on ethical dilemmas? | ❑ | ❑ |
| 3. Relational Authority: Do you build trust through presence, not just summaries? | ❑ | ❑ |
| 4. Integrity: Do you take visible responsibility for failures without deflecting to data, AI, or context? | ❑ | ❑ |

| Section 2: Misuse Risks (The Temptations) | YES | NO |
| --- | :---: | :---: |
| 5. Hard Conversations: Do you deliver all sensitive decisions directly and in real time, without delegating to AI or asynchronous tools? | ❑ | ❑ |
| 6. Judgment vs. Patterns: Do you intentionally override AI when human context differs? | ❑ | ❑ |
| 7. The Counter-Argument: Do you actively seek reasons why the AI might be wrong? | ❑ | ❑ |
| 8. Accountability Theater: Do you use AI data to drive change, not just to “tick a box”? | ❑ | ❑ |

| Section 3: Non-Negotiables (The Red Lines) | YES | NO |
| --- | :---: | :---: |
| 9. Final Decisions: Are all hiring, firing, and promotion calls made by a human? | ❑ | ❑ |
| 10. Unfiltered Reality: Do you “walk the floor” to get raw feedback, bypassing AI sentiment reports? | ❑ | ❑ |
| 11. Presence: Do you prioritize direct, synchronous engagement over AI-mediated communication? | ❑ | ❑ |

Scoring:

Section 1: Baseline capability (all must be “Yes”)

Section 2: The number of “No” answers is the drift index. (Any “No” is an operational failure that must be corrected before scaling AI use.)

Section 3: Any “No” = integrity breach requiring immediate intervention.

Recommended “3-Step Reset” Action Plan:

1. The “Red Line” Declaration

Have leadership formally commit to the Non-Negotiables in Section 3.

Action: Publish a “Human-First Charter” stating: “We do not use AI to deliver consequential decisions. Responsibility remains human.” This shows the team, clearly and publicly, where the lines are, which supports psychological safety.

2. The “Bias & Counter-Argument” Ritual

To tackle the “Illusion of Clarity,” add an extra step to each strategy meeting.

Action: For every AI-generated proposal, assign one person to be the “Human Skeptic.” Their role is to challenge the recommendation and force justification before any decision is accepted. This moves the leader from a passive recipient to an active pilot.

3. The Quarterly “No” Audit

A simple check-and-balance system.

Action: Once a quarter, review any “No” answers from the assessment. If a “No” appears in Section 3 (Non-Negotiables), it triggers a mandatory “Leadership Reset.” The leader must re-engage directly with affected teams, restate standards, and reassume visible ownership of decisions.

DETAIL – Why Algorithms Can’t Lead

As leaders incorporate AI into their organizations, it’s essential to remember a crucial distinction: AI is a tool, while leadership is a responsibility. The risks associated with misusing AI, including eroding trust, evading accountability, and outsourcing judgment, lie squarely with leadership. The real question isn’t whether AI is powerful; it clearly is. The question is: at what point does wielding power without responsibility become a liability?

AI can accelerate thinking, but it cannot own consequences. That distinction defines the boundary.

⬜ What AI Cannot Do (Structural Limits)

1. Assume Accountability

AI cannot assume legal, moral, or reputational responsibility. It lacks agency, consciousness, and the ability to experience consequences. This is a fundamental limitation, not a temporary issue. AI cannot “face the employee afterward” or “absorb reputational risk.” It is a tool, not a substitute for human accountability.

2. Exercise Moral Judgment Under Uncertainty

AI can imitate ethical reasoning based on data and established rules. However, it cannot genuinely comprehend or handle the complexities of moral choices, particularly in situations with conflicting values or insufficient information. AI lacks subjective experience, empathy, and the capacity to grasp the human impact of decisions.

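3. Hold Authority in Human Relationships

Authority in human relationships rests on trust built over time, credibility under pressure, and visible consistency between words and actions. AI has no history with the team, no stake in the outcome, and no presence. It can help a leader communicate, but it cannot hold the relationship that gives leadership its authority.

4. Create Real Accountability Loops

Real accountability requires someone willing to confront avoidance, challenge rationalization, and enforce standards when the situation becomes uncomfortable. AI can track tasks and send reminders, but monitoring is not enforcement. Only a leader can compel action.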

5. Model Integrity

Integrity is shown through actions and choices made under pressure. AI cannot choose between what is easy and what is right, nor can it experience pressure or make sacrifices. It can only reflect the integrity, or lack thereof, of its designers and users.

🟧 What AI Should Not Do (Misuse Risks)

1. Outsource Hard Conversations

Using AI to deliver layoffs, performance warnings, or conflict resolution is a form of leadership avoidance. It undermines trust and sidesteps the human responsibility at the core of leadership.

2. Replace Judgment with Pattern Matching

AI is skilled at recognizing patterns and making predictions based on data, but effective leadership often requires breaking those patterns and making judgments that consider specific contexts, which data alone cannot capture.

3. Create the Illusion of Clarity

AI can produce clear, organized outputs, but clarity in language doesn’t necessarily imply clarity in thought, strategy, or accountability. Leaders must ensure that AI-generated outputs are critically assessed and owned by humans.

4. Enable Accountability Theater

Dashboards and reports may create the illusion of accountability, but without human enforcement and follow-through, they remain mere noise. This risk is well-documented in the field of organizational behavior.

🟥 What AI Must Not Do (Non-Negotiables)

1. Make Final Decisions That Impact People’s Lives

AI should provide information, not make decisions, particularly in critical areas such as hiring, firing, compensation, and disciplinary measures. This principle serves as both an ethical and practical guideline for responsible AI usage.

2. Act as a Proxy for Leadership Presence

Leadership presence, defined as being both physically and emotionally present, is irreplaceable. Interactions mediated by AI cannot substitute for human engagement in building trust and resolving conflict.

3. Define Reality Inside the Organization

Relying solely on AI summaries or filtered data can create a distorted view of organizational reality. Leaders must seek direct observation and unfiltered feedback to maintain an accurate understanding of their environment.

AI as Amplifier, Not Operator

AI is best used as an amplifier of human capabilities: increasing clarity, supporting consistency, and enhancing visibility. It should not replace human ownership, judgment, or accountability. AI is a tool for strengthening leadership systems, not for replacing them.

Bottom Line

Leadership can be defined as “ownership under consequence,” a quality that AI lacks. Although AI is a powerful tool, it cannot fulfill the role of a leader. Misusing AI to avoid discomfort, dilute accountability, or replace human judgment carries real risks. These risks are well-documented in both academic and industry literature.